Most founders get investor feedback for the first time in a live meeting. By then, the stakes are real and the chance to adjust is gone. The better approach: get structured feedback before you pitch, so your first real meeting starts with the hard questions already answered. AI evaluation tools now make this possible. They run a simulated investor session, connect to your real data, and produce a shareable report that tells you what investors are likely to ask about first, what your strongest arguments are, and where the gaps are. Founders who arrive with this proof pre-built can raise faster because investors can skip the orientation phase.

The market for AI in venture capital has shifted dramatically. According to Affinity's 2026 State of VC report, 85% of private capital dealmakers now use AI for daily tasks, up from 76% the prior year. On the investor side, one fund reported cutting screening time from 45 minutes to 8 minutes per company using automated scoring, which enabled reviewing 200 additional companies per month (VC Lab, 2025). AI is changing how investors work. It is also changing how founders can prepare.

This article covers what AI startup evaluation tools actually do, why structured proof matters more than polished documents, and how to use these tools to walk into your first meeting with the hard questions already answered.

What Problem Do AI Startup Evaluation Tools Solve?

The core problem is not that founders lack information. It is that investors cannot tell what is real.

A pitch deck is a curated story. Financial models are assumptions. References are hand-picked. Investors know this. Decks are screened at high velocity and the time spent per deck has compressed year over year, even as total deck interactions rose in 2024 (DocSend 2024 Funding Divide Report). The deck is not where conviction forms. It is just the door.

Conviction forms in live conversation. And here is the problem: every new investor starts that conversation from scratch. A single seed fundraise stretches across dozens of independent meetings with partners at different funds. Each one begins with the same questions. Each investor needs to independently determine whether the founder's claims hold up, because conviction cannot be transferred between funds.

A 2023 systematic review of venture capital inefficiencies framed this directly: information asymmetry between founder and investor is the core market friction, and each fund reconstructs its own map of that asymmetry in every deal (ScienceDirect, Inefficiencies of Venture Capital Funding, 2023). The system is not thorough in the sense of shared institutional rigor. It is repetitive: the same questions, re-asked, by different partners, with no conviction carrying across.

AI startup evaluation tools exist to solve this gap. They produce structured proof before the meetings begin, so the conversation starts at a higher level.

Why Paper Fails Investors

Every founder builds a data room. Deck, financials, cap table, product screenshots, maybe a competitive analysis. The problem is that none of these documents prove anything on their own.

Most decks shared with investors never make it to a serious diligence loop. They get skimmed and set aside. And among the few that do survive the filter, the static documents are rarely what closes the deal. Conviction is formed in live conversation where the partner can probe, challenge, and watch the founder react. Spreadsheets confirm; conversations decide.

Paper fails because it is static. It cannot respond to follow-up questions. It cannot show how a founder thinks under pressure. It cannot connect a revenue claim to a live Stripe dashboard or a team claim to actual LinkedIn profiles. It is a snapshot, and investors know snapshots can be staged.

According to Crunchbase's 2025 data, the average seed founder spends more than six months fundraising and repeats the same answers across 15 to 20 investor meetings. That is not a pitch problem. It is a proving problem. The founder has the answers. They just have no way to prove them once and share the proof with everyone.

What Investors Are Actually Doing in First Meetings

The first meeting is not a curiosity exercise. It is a proof mechanism.

Feedback without structure misleads. A 2025 systematic review of 344 news articles and 970 academic publications on startup valuation found that early signals snowball: each positive or negative read reinforces the next one, and momentum compounds quickly in either direction (Taylor & Francis, Snowballing Signaling Theory in Startup Valuation, 2025). That is why the first few investor conversations carry more weight than they should. If a founder arrives unprepared and gets three lukewarm reads, those reads become the starting position with investor four. Pre-pitch feedback exists to catch the loop before it starts.

The fastest path from first meeting to term sheet goes through pattern confirmation, not slide design. Investors are looking for the team signal first, because the team is the one variable that has to work for every other variable to matter. A well-designed competitive matrix cannot rescue a team the investor does not trust. A trusted team, with clean traction proof, gives investors permission to do less work and move faster.

Forum Ventures' analysis of 300 pre-seed and seed deals found that raise cycles now run 12 to 18 months for most founders, while top companies close in 3 to 6 weeks (Forum Ventures, 2025). The difference is not better decks. It is that the top companies arrive with proof already established.

The Repetition Trap

Every fund starts from zero. Even when a warm intro comes with context, the investor on the other end still needs to form their own conviction. That conviction cannot be transferred from one partner to another, or from one fund to another.

The median time to close a seed round hit 142 days in 2025, more than double the 69 days it took in 2021 (Carta State of Private Markets, 2025). VCs who back only 5 to 12 companies per year still review hundreds of pitches (NVCA, 2025). Each screening conversation restarts the same diligence questions: what is the team's background, what does the traction look like, what is the go-to-market plan, why now.

A 2023 study found that VCs use five distinct approaches to team evaluation, ranging from purely intuitive to scientific-rational, with most leaning intuitive (Journal of Small Business Management, 2023). Different investors weight different things. The same founder gets different reactions in the same week, not because the startup changed, but because each investor brings a different lens.

This is why repetition is not just annoying for founders. It is structurally inevitable. Without a pre-built proof layer, every conversation is a fresh start.

How AI Startup Evaluation Tools Create a Proof Layer

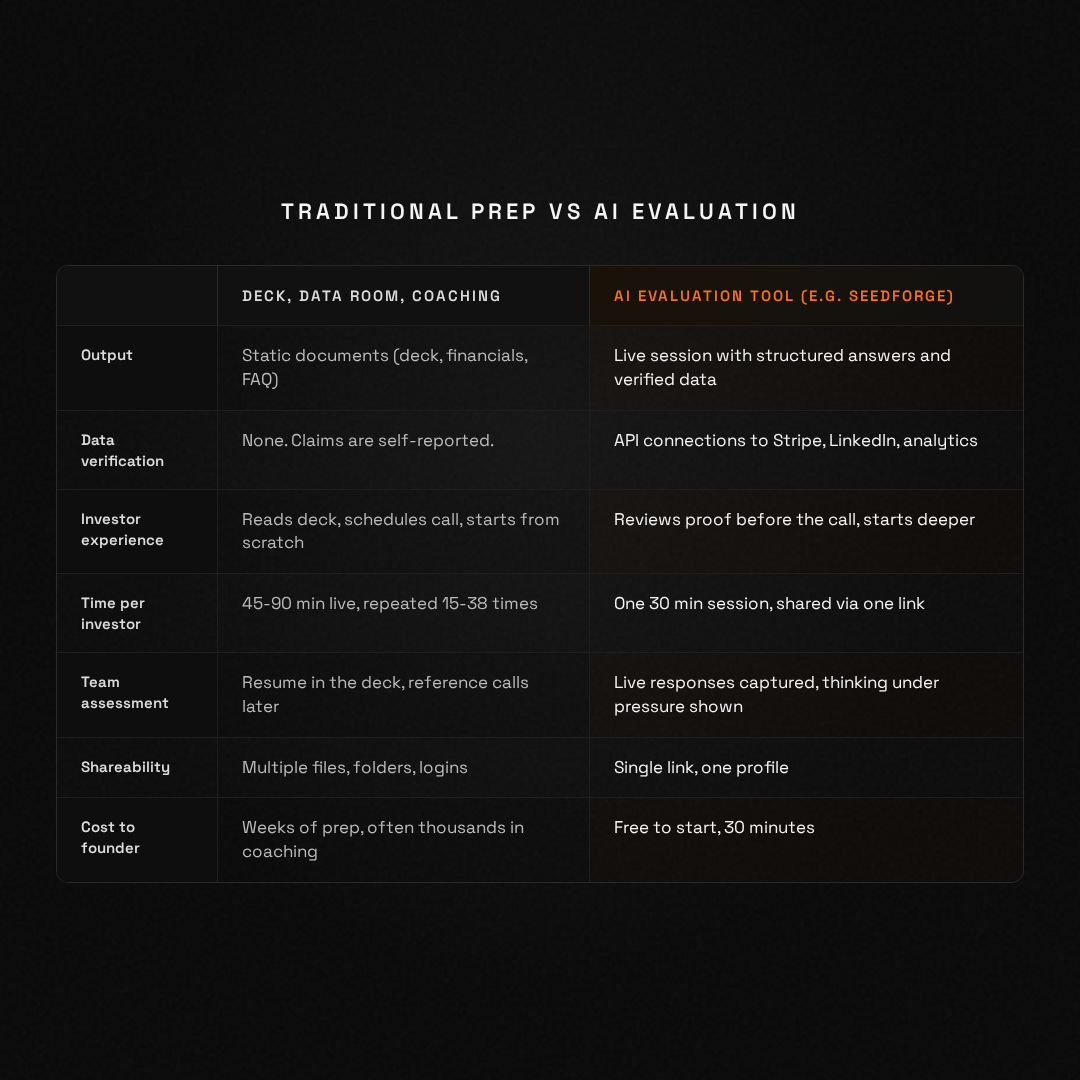

The best AI evaluation tools do three things that traditional preparation cannot.

First, they simulate the questions investors actually ask. Not generic "practice your pitch" exercises. Real diligence questions across team, market, traction, unit economics, competition, and use of funds. The founder answers live, under pressure, the way they would in an actual meeting.

Second, they connect to real data sources. A claim about revenue can be verified against a Stripe dashboard. A claim about team can be cross-referenced with LinkedIn profiles. A claim about product usage can be linked to analytics. This is what separates structured proof from a polished deck.

Third, they produce a shareable output. Instead of repeating the same answers in meeting after meeting, the founder shares one link. The investor reviews it before the call, arrives informed, and the first conversation starts deeper.

This is the specific gap SeedForge was built to close. A founder runs one 30-minute AI session before they open outreach, not after. The session plays the role of a first-meeting VC: it probes team, market, traction, competition, and unit economics, and stress-tests the answers live. The output is an honest readout of where the startup is strong and where it will get pushed. The same output becomes the Living Profile the founder shares with every investor from that point on. You can run it before your round to see what investors will see. Start free at seedforge.com.

McKinsey Global Institute's 2025 research describes the broader shift: AI is moving professional work from producing first drafts to framing questions, validating outputs, and applying judgment (McKinsey, 2025). The same principle applies here. AI handles the structured information gathering. Investors apply their judgment to what the AI surfaces.

How to Use an AI Evaluation Tool Before Your Raise

This is a practical framework for founders considering AI evaluation tools as part of their fundraise preparation.

Before the session:

Gather your real numbers. Monthly revenue, user count, churn rate, burn rate, runway. The tool will ask about these, and connected data sources will verify the claims. Do not round up. Do not estimate generously. The point is proof, not polish.

Know your weak spots. Every startup has them. The founders who close fastest do not hide weaknesses. They name them, explain why they exist, and describe what they are doing about them. An AI evaluation will surface these gaps. That is the value.

During the session:

Answer as you would in a real investor meeting. The questions cover the same ground: why this team, why this market, what is the traction, what is the competitive advantage, how will you use the capital. The session is typically 30 minutes. Your answers are captured, structured, and organized by category.

After the session:

Review your output. Look at where your answers were specific and backed by data versus where they were vague. The gaps in your output are the same gaps investors will find in your first meeting. Fix them before you start pitching.
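The review step can be made concrete with a small script. This is only a sketch under stated assumptions: the `answers` structure, its field order, and the sample entries are hypothetical and do not reflect the export format of any particular tool. It groups the answers that were not backed by data, by category, so the gaps are easy to see.

```python
from collections import defaultdict

# Hypothetical session output (an assumption, not a real tool's format):
# each entry is (category, answer summary, backed_by_data).
answers = [
    ("traction", "Monthly revenue confirmed against connected billing data", True),
    ("market", "The market is very large", False),
    ("team", "Founder backgrounds match public profiles", True),
    ("unit economics", "Margins should improve at scale", False),
]

def gaps_by_category(entries):
    """Group the answers that lack supporting data by category."""
    gaps = defaultdict(list)
    for category, summary, backed_by_data in entries:
        if not backed_by_data:
            gaps[category].append(summary)
    return dict(gaps)

unbacked = gaps_by_category(answers)
for category, summaries in unbacked.items():
    print(f"{category}: {len(summaries)} answer(s) to tighten before pitching")
```

Each category that shows up in the result is a place where an investor is likely to push, so it is worth replacing the vague answer with a specific, verifiable one first.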

Share the profile before the first investor meeting, not after. The goal is not to impress. The goal is to let the investor show up already past the basics. When a partner opens a structured profile before a call, the call starts with the questions the deck cannot answer. That compression is the entire value of pre-pitch feedback. Feedback you get after you have already pitched is cleanup. Feedback you get before you pitch is positioning.

What to look for in an AI evaluation tool:

Does it ask real diligence questions, or generic pitch practice prompts? Does it connect to live data sources? Does it produce a shareable output that investors can review independently? Does it cover the areas investors actually weight highest (team, traction, market, unit economics)? If the tool does not do these things, it is pitch coaching with a new label.

What Does an Investor Readiness Assessment Actually Measure?

The term "investor readiness" is used loosely across the startup ecosystem. Accelerators run readiness programs. Consultants offer readiness assessments. But readiness for what, exactly?

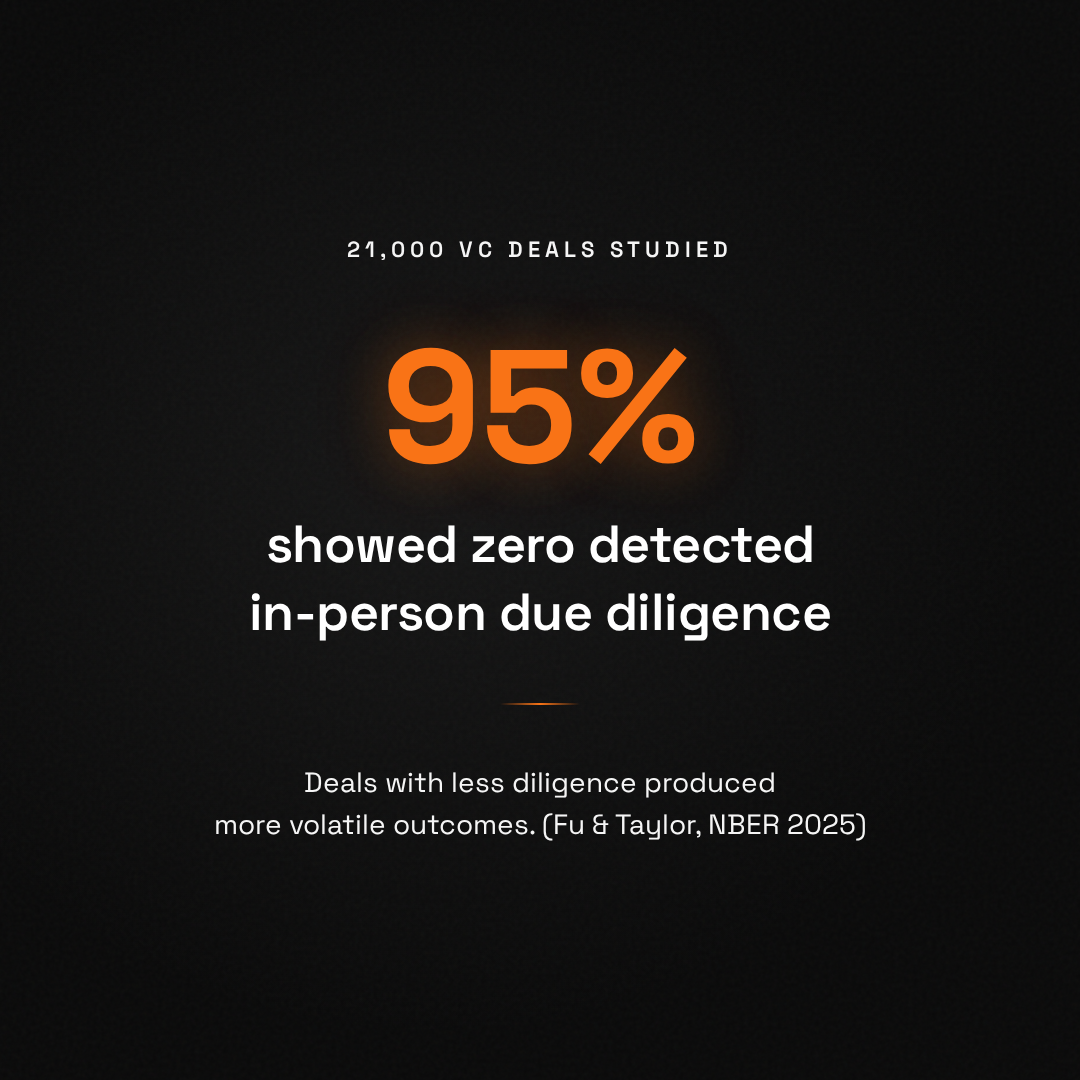

According to Harvard research, 75% of VC-backed companies fail to return capital to investors (Ghosh, Harvard). CB Insights' analysis of 431 VC-backed failures found that 43% cited poor product-market fit as the root cause (CB Insights, 2024). The issue is not that founders are unprepared for meetings. It is that many startups are not yet at the stage where investor proof exists.

A real investor readiness assessment measures whether proof exists, not whether the pitch is polished. Specifically:

Can the founder articulate what the business does in plain language? Can they name their customers and describe the buying pattern? Is there revenue, and can it be independently confirmed? Does the team have relevant experience, and can it be verified? Is the cap table clean? Is the use of funds specific and defensible?

If the answer to most of these is yes, the founder is ready. If not, no amount of pitch coaching will change the outcome. The meeting will expose the gaps regardless.
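The checklist above can be written down as a simple self-assessment. The sketch below is illustrative only: the `Check` class, the sample answers, and the reading of "most" as "more than half" are assumptions made for the example, not a scoring method from any fund or tool.

```python
from dataclasses import dataclass

@dataclass
class Check:
    question: str
    passed: bool

def is_ready(checks, threshold=0.5):
    """True when more than `threshold` of the checks pass (assumed meaning of 'most')."""
    if not checks:
        return False
    return sum(c.passed for c in checks) / len(checks) > threshold

def open_gaps(checks):
    """Questions the first meeting would expose."""
    return [c.question for c in checks if not c.passed]

# Sample answers are made up for illustration.
checks = [
    Check("Plain-language description of the business", True),
    Check("Named customers and a described buying pattern", True),
    Check("Revenue that can be independently confirmed", False),
    Check("Relevant team experience that can be verified", True),
    Check("Clean cap table", True),
    Check("Specific, defensible use of funds", False),
]

print("Ready:", is_ready(checks))
print("Gaps:", open_gaps(checks))
```

Passing the threshold does not make the remaining gaps disappear. The failed checks are still the first places an investor will probe.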

Frequently Asked Questions

What is an AI startup evaluation tool?

An AI startup evaluation tool runs a structured session covering the questions investors ask in first meetings. It captures the founder's answers, connects to real data sources like Stripe or LinkedIn for verification, and produces a shareable output. Investors review the output before the first call, which means the conversation starts deeper and moves faster toward a decision.

How is an AI evaluation different from a pitch simulator?

Pitch simulators practice delivery. AI evaluation tools produce proof. A simulator helps you rehearse your deck narrative. An evaluation tool asks real diligence questions, verifies your data against live sources, and outputs a structured document that investors can use independently. The output of a simulator is confidence. The output of an evaluation tool is a proof layer that travels without you.

Do investors actually use founder evaluation outputs?

Yes, when the output is structured and backed by verified data. Investors scan decks at high volume and spend very little time on each one, so a deck alone rarely answers their diligence questions. A proof document with verified traction data, team backgrounds, and pre-answered diligence questions gives investors what they need to move faster, which can shorten the sequence of meetings. The key is that the data is verified against live sources, not just self-reported.

When should a founder use an AI evaluation tool?

Before starting active fundraising. The best time is after the startup has real traction to show (revenue, users, partnerships) but before the first investor meeting. The evaluation surfaces gaps the founder can fix beforehand. Using it mid-raise still helps by reducing repetition across remaining meetings, but the maximum value comes from arriving at meeting one with proof already built.

What does an investor readiness assessment cost?

Costs vary widely. Traditional consulting assessments run $2,000 to $10,000. Accelerator programs include readiness components but take 7 to 14% equity. AI-powered tools like SeedForge are free to start, with a 30-minute session producing a shareable Living Profile. The cost question matters less than whether the tool produces verifiable proof that investors can use independently.

Can an AI tool replace investor judgment?

No. AI evaluation tools replace the information-gathering phase, not the judgment phase. A survey of 885 VCs found that 95% cite the management team as an important factor in investment decisions, and 47% name it the single most important one (Gompers et al., 2020). That assessment is fundamentally human. What AI does is ensure investors have the structured information they need to apply that judgment efficiently, rather than spending three meetings just establishing the basics.